The Missing Argument: Motivation and Artificial Intelligence

A few days ago, I wrote about Nick Bostrom’s theory of superintelligence as an answer to the debate between Elon Musk and Mark Zuckerberg about the threat that artificial intelligence (AI) represents to humanity. The dangerous and even apocalyptic versions of AI are certainly dominating the headlines but, almost without exception, the debate has stayed at a very abstract level. Today, I would like to explore a challenge that will not only be at the core of the next generation of AI technology but is also essential to any theory of AI taking over the world: motivation.

Goal setting and motivation are key characteristics of the human decision-making process that are still missing from AI agents. Without a dynamic motivation system, it is unlikely that AI systems will be able to cause harm to humanity on purpose. Figuring out the mechanics of motivation is also essential to building more advanced AI agents. Until now, motivation in AI agents has been expressed through very constrained goal-setting mechanisms, such as maximizing a utility function against a well-known set of hypotheses. That type of goal is certainly simple to express in code, but it is fundamentally different from how humans set goals. Let’s review a few thoughts on the topic to dig deeper into the argument about motivation and AI agents.
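To make the "constrained goal" idea concrete, here is a minimal sketch of an agent that maximizes expected utility over a fixed set of hypotheses. Everything in it is an assumption made for illustration: the hypothesis names, the probabilities, the utility table, and the function names are hypothetical and do not come from any particular system.

```python
from typing import Dict

# Hypothetical beliefs over a fixed, fully known set of hypotheses.
beliefs: Dict[str, float] = {"market_up": 0.6, "market_down": 0.4}

# Hypothetical utility table: utility[action][hypothesis].
utility: Dict[str, Dict[str, float]] = {
    "buy": {"market_up": 10.0, "market_down": -8.0},
    "hold": {"market_up": 2.0, "market_down": -1.0},
    "sell": {"market_up": -5.0, "market_down": 6.0},
}


def expected_utility(action: str) -> float:
    """Average the utility of an action over the believed hypotheses."""
    return sum(p * utility[action][h] for h, p in beliefs.items())


def best_action() -> str:
    """The agent's entire 'motivation': pick the highest expected utility."""
    return max(utility, key=expected_utility)
```

With these made-up numbers, `best_action()` selects `"buy"` (expected utility of about 2.8, versus 0.8 for `"hold"` and -0.6 for `"sell"`). The point is how narrow the goal is: the agent cannot question the utility table or invent a new objective, it can only rank the options it was given.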

1 — Discrete vs. Generic Goals

The human brain has a unique capacity for formulating very abstract goals and dynamically breaking them down into more constrained and even tactical objectives. Today, it is inconceivable to think about AI agents operating on generic goals such as “maximizing return to shareholders” or “being happier”. However, I think more generic, although not completely abstract, motivations will be part of the next generation of AI agents. Keep in mind that generic motivation is also more conducive to autonomous bad behavior by AI agents.

2 — Passive vs. Active Motivation

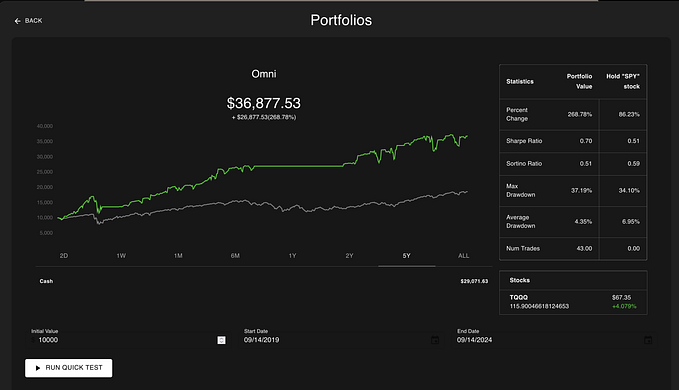

Most of the motivation modeling in AI agents is based on passive goals such as “find patterns in dataset X” or “predict the value of attribute X”. Those types of goals don’t necessarily require the model to take any action. Active motivation systems are common in scenarios such as quantitative trading, in which AI agents take actions (buying or selling securities) based on their ultimate goals. Because their decisions have direct effects in the world, active motivation systems are the riskier of the two.
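The difference between the two kinds of goals can be sketched in a few lines. The code below is an illustrative toy, not a trading strategy: the moving-average forecast, the `TradingAgent` class, and its buy/sell rule are all hypothetical names and assumptions introduced for this example.

```python
from dataclasses import dataclass, field
from typing import List


def predict_next_price(prices: List[float]) -> float:
    """Passive goal: return a naive forecast (mean of the last three prices)."""
    window = prices[-3:]
    return sum(window) / len(window)


@dataclass
class TradingAgent:
    """Active goal: the same forecast now changes the agent's holdings."""
    cash: float
    shares: int = 0
    log: List[str] = field(default_factory=list)

    def step(self, prices: List[float]) -> str:
        forecast = predict_next_price(prices)
        price = prices[-1]
        if forecast > price and self.cash >= price:
            # Forecast above the current price: buy one share.
            self.cash -= price
            self.shares += 1
            self.log.append("buy")
        elif forecast < price and self.shares > 0:
            # Forecast below the current price: sell one share.
            self.cash += price
            self.shares -= 1
            self.log.append("sell")
        else:
            self.log.append("hold")
        return self.log[-1]
```

`predict_next_price` only produces a number, so a wrong forecast costs nothing by itself. `TradingAgent.step` turns the same number into a change of state (cash and shares), which is where the extra risk of active motivation comes from.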

3 — Building a Dynamic Motivation System

At the risk of stating the obvious, part of the trick to solving goal setting in AI agents is to build motivation systems that can change and adapt over time. Reinforcement learning, in which an agent adjusts its behavior based on the rewards it receives, should play a key role in enabling that new type of dynamic motivation system.
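As a rough illustration of preferences that adapt over time, here is a minimal sketch of a bandit-style learner, one of the simplest reinforcement learning setups. It is only one possible reading of a "dynamic motivation system": the `AdaptiveAgent` class, the action names, the constant step size, and the fixed exploration schedule (used instead of random exploration so the run is reproducible) are all assumptions made for this example.

```python
from typing import Callable, Dict, List


class AdaptiveAgent:
    """Keeps a running value estimate per action and prefers the highest."""

    def __init__(self, actions: List[str], step_size: float = 0.5,
                 explore_every: int = 4) -> None:
        self.actions = list(actions)
        self.values: Dict[str, float] = {a: 0.0 for a in self.actions}
        self.step_size = step_size
        self.explore_every = explore_every
        self.t = 0

    def preferred(self) -> str:
        """The action the agent currently values most."""
        return max(self.actions, key=lambda a: self.values[a])

    def choose(self) -> str:
        self.t += 1
        if self.t % self.explore_every == 0:
            # Deterministic exploration: cycle through the actions.
            index = (self.t // self.explore_every) % len(self.actions)
            return self.actions[index]
        return self.preferred()

    def learn(self, action: str, reward: float) -> None:
        # A constant step size lets old experience fade, so estimates
        # can follow a reward signal that changes over time.
        self.values[action] += self.step_size * (reward - self.values[action])


def run(agent: AdaptiveAgent, reward_fn: Callable[[str], float],
        steps: int) -> None:
    """Let the agent act and learn for a number of steps."""
    for _ in range(steps):
        action = agent.choose()
        agent.learn(action, reward_fn(action))


agent = AdaptiveAgent(["gather_data", "trade"])
run(agent, lambda a: 1.0 if a == "gather_data" else 0.0, 40)
first_preference = agent.preferred()
run(agent, lambda a: 1.0 if a == "trade" else 0.0, 40)
second_preference = agent.preferred()
```

In this run the agent first comes to prefer `gather_data` and then, once the rewards flip, shifts its preference to `trade`. Nothing in the code names a goal explicitly; what the agent "wants" is whatever its recent experience has rewarded, which is the sense in which the motivation is dynamic.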

4 — The Intelligence-Motivation Orthogonality Thesis

One of the most intriguing, and certainly most controversial, theories about motivation in AI agents is known as the Orthogonality Thesis. In essence, the Intelligence-Motivation Orthogonality Thesis states that intelligence and motivation are essentially independent: more or less any level of intelligence can be combined with more or less any final goal. In my opinion, the Intelligence-Motivation Orthogonality Thesis is more relevant to strong AI systems than to weak AI systems, and it is a philosophical argument in favor of caution when it comes to generally intelligent AI agents.

5 — The Instrumental Convergence Thesis

Another interesting theory about the relationship between motivation and AI states that highly intelligent agents with final goals can pursue intermediate goals for instrumental reasons. For instance, a trading algorithm focused on defense stocks could become very knowledgeable about weapons manufacturing because that knowledge directly contributes to its final goal. Again, this argument is more applicable to strong AI systems.

These are some of the most common arguments to consider when thinking about the role of motivation in AI agents. Motivation is certainly one of the aspects that will be at the core of the AI debate in the not-so-distant future :)